Types of server hardware

Server hardware comes in three forms: rack servers, blade servers, and mainframe servers.

1. Rack servers

Rack-mounted servers are general-purpose machines which (as the name suggests) are mounted in a server rack alongside other rack servers and/or network and storage devices. Rack servers are about the size of a standard computer, so they take up minimal space, and they can support a broad range of workloads. They’re easy to replace and to upgrade with additional components, and the servers within a rack don’t all need to be the same model. Cables for power, networking, and storage are attached at the back of the rack.

2. Blade servers

Blade servers are high-performance servers tightly configured within a chassis. They take up even less space than rack servers. Modular components (blades) fit into a chassis alongside other blades. Each individual blade is a server with its own processor, memory, and network controllers. The chassis provides consolidated power, cooling, and networking, shared across all the blade servers it holds. Drives are hot-swappable (meaning they can be replaced without shutting down the system). Information, errors, and/or faults can be managed from a central console, but some management tasks may need to be performed on the physical server.

3. Mainframe servers

Mainframes are large, high-performance computers. Today’s mainframes are much more compact than older models (which could easily take up an entire room), but they’re still significantly bulkier than rack servers or blades. Mainframes are designed for processing power and high throughput. They support high-volume, data-intensive workloads and large-scale simultaneous transactions. They are also highly configurable, with hot-swappable hardware, and their system-wide layers of redundancy make them extremely reliable.

Types of server software

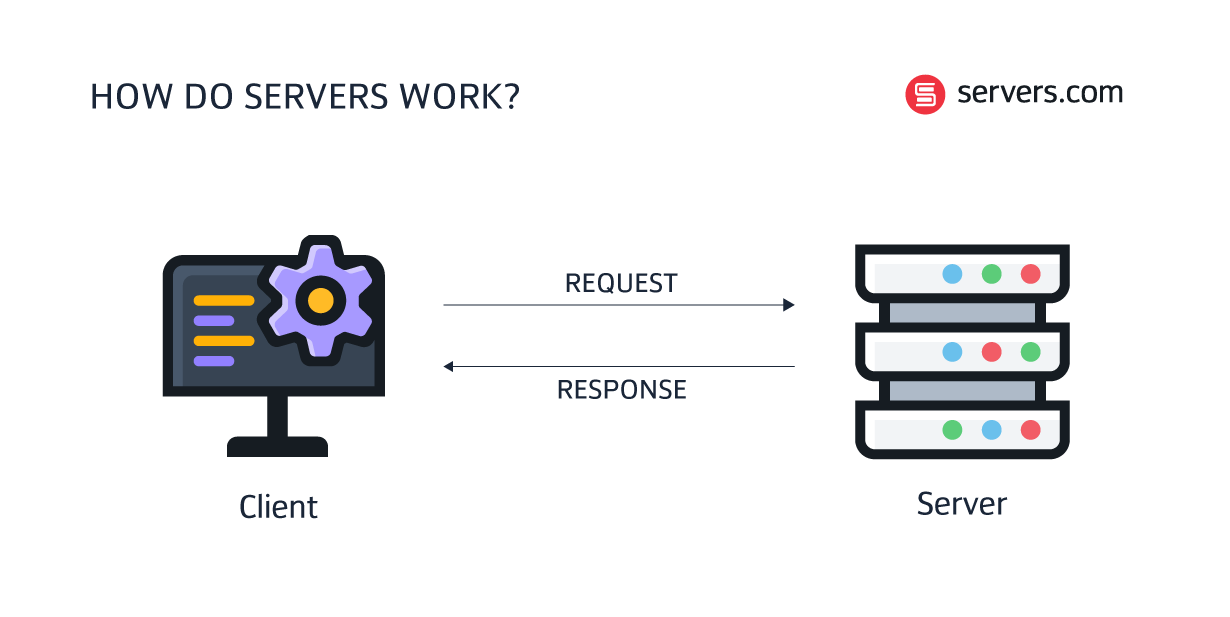

The three server types discussed above are all examples of server hardware – the physical devices that store and run applications. But the hardware cannot do anything without software. Server hardware and server software work together as a system. And the type of server software installed on the server hardware dictates its operational capacity.

It can be a bit confusing, because what people often refer to as different ‘types of servers’ are actually just different combinations of server hardware and server software.

Here are some examples:

1. Web servers

A web server is a physical server running web server software. Web servers make web pages accessible online. The software component controls how users access hosted files and the hardware component stores the web server software.

Web servers deliver static web content over Hypertext Transfer Protocol (HTTP). When a user types a URL into a browser’s address bar, the browser sends an HTTP request, and the web server retrieves the relevant hosted files and sends them back to the browser.
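The request-and-response exchange described above can be sketched with Python's standard library. This is a minimal illustration rather than production web server software: the helper names (`start_static_server`, `fetch`, `demo`) and the sample `index.html` are assumptions made up for the example.

```python
import functools
import tempfile
import threading
from http.client import HTTPConnection
from http.server import SimpleHTTPRequestHandler, ThreadingHTTPServer
from pathlib import Path


class QuietHandler(SimpleHTTPRequestHandler):
    """Static file handler that skips the default per-request logging."""

    def log_message(self, format, *args):
        pass


def start_static_server(directory: str, port: int = 0) -> ThreadingHTTPServer:
    """Serve the files in `directory` over HTTP from a background thread.

    Port 0 asks the operating system for any free port.
    """
    handler = functools.partial(QuietHandler, directory=directory)
    server = ThreadingHTTPServer(("127.0.0.1", port), handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch(server: ThreadingHTTPServer, path: str) -> bytes:
    """Play the browser's role: send a GET request and return the body."""
    host, port = server.server_address[:2]
    connection = HTTPConnection(host, port, timeout=5)
    try:
        connection.request("GET", path)
        return connection.getresponse().read()
    finally:
        connection.close()


def demo() -> bytes:
    """Host one hypothetical page, request it, then shut the server down."""
    with tempfile.TemporaryDirectory() as site_root:
        Path(site_root, "index.html").write_text("<h1>Hello</h1>", encoding="utf-8")
        server = start_static_server(site_root)
        try:
            return fetch(server, "/index.html")
        finally:
            server.shutdown()
            server.server_close()
```

Calling `demo()` returns the bytes of the hosted file unchanged, which is what "static" content means here: the server sends back what is stored on disk without computing anything.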

2. Application servers

An application server is a physical server running application software (an application instance). Application servers provide access to business applications and support the performance of those applications.

Like web servers, application servers can deliver web content, but their primary function is to enable interactions between the client and the server-side application code (or business logic). Application servers generate and deliver dynamic content - for instance transactions, decisions, and real-time analytics.
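To illustrate the difference between static and dynamic content, here is a small sketch of server-side business logic written as a WSGI application, the standard Python interface between a web server and application code. The price catalogue, the `quote_app` name, and the `call` helper are hypothetical and exist only for this example.

```python
import json
from urllib.parse import parse_qs

# Hypothetical catalogue; a real application would usually query a database.
PRICES = {"widget": 2.50, "gadget": 10.00}


def quote_app(environ, start_response):
    """WSGI application: compute an order quote from the query string."""
    query = parse_qs(environ.get("QUERY_STRING", ""))
    item = query.get("item", [""])[0]
    try:
        qty = int(query.get("qty", ["1"])[0])
    except ValueError:
        qty = 0  # treated as invalid below

    headers = [("Content-Type", "application/json")]
    if item not in PRICES or qty < 1:
        start_response("400 Bad Request", headers)
        return [json.dumps({"error": "unknown item or bad quantity"}).encode()]

    # The response is generated per request rather than read from a file.
    result = {"item": item, "qty": qty, "total": PRICES[item] * qty}
    start_response("200 OK", headers)
    return [json.dumps(result).encode()]


def call(app, query_string):
    """Invoke the app directly, the way a WSGI server would for one request."""
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    body = b"".join(app({"QUERY_STRING": query_string}, start_response))
    return captured["status"], json.loads(body)
```

For example, `call(quote_app, "item=widget&qty=4")` produces a 200 response whose total is computed at request time, while an unknown item yields a 400 response.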

3. Game servers

A game server is a physical server running application software (an application instance) that handles the server side of a multiplayer game. Game servers are typically deployed on dedicated server infrastructure and are designed to support smooth gaming experiences for players by reducing latency and lag.

Game servers act as the communication link between players in a multiplayer game, sending and receiving data, such as player locations, scores and game assets, between players constantly.
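The relay role described above can be sketched in memory, without any networking. The `GameServer` and `PlayerState` classes and their fields are assumptions for illustration; a real game server would also handle transport, timing, and validation.

```python
from dataclasses import dataclass, field


@dataclass
class PlayerState:
    """The data one player reports: position and score."""
    x: float = 0.0
    y: float = 0.0
    score: int = 0


@dataclass
class GameServer:
    """Keeps the latest state of every player and relays it to the others."""
    players: dict = field(default_factory=dict)

    def receive(self, player_id: str, x: float, y: float, score: int) -> None:
        """Store the most recent update sent by one player."""
        self.players[player_id] = PlayerState(x, y, score)

    def snapshot_for(self, player_id: str) -> dict:
        """Return what this player needs to see: everyone else's state."""
        return {pid: state for pid, state in self.players.items() if pid != player_id}


server = GameServer()
server.receive("alice", 1.0, 2.0, 10)
server.receive("bob", 5.0, 5.0, 7)
alice_view = server.snapshot_for("alice")  # contains only bob's state
```

Each player sends its own updates and receives a snapshot of the other players, so the server is the single link through which all game state flows.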

4. Database servers

A database server is a physical server running database software (a database instance). The primary function of a database server is to receive client requests, then search for and retrieve the relevant data. That could be anything from financial transactions to analytics processing.

There are many different types of database software – each best suited to different use cases. For instance, relational databases are great for tracking inventories and ecommerce transactions whilst non-relational databases are suited to complex data.
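As a rough illustration of the inventory use case, the sketch below uses Python's built-in `sqlite3` module. Note the assumption: SQLite is an embedded relational database rather than a database server, so it stands in here only to show the request, search, and retrieve pattern. The table layout and sample rows are invented for the example.

```python
import sqlite3


def build_inventory() -> sqlite3.Connection:
    """Create an in-memory relational table holding a few sample stock rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE inventory (sku TEXT PRIMARY KEY, name TEXT, stock INTEGER)"
    )
    conn.executemany(
        "INSERT INTO inventory VALUES (?, ?, ?)",
        [("A-100", "Widget", 12), ("B-200", "Gadget", 3), ("C-300", "Gizmo", 0)],
    )
    conn.commit()
    return conn


def low_stock(conn: sqlite3.Connection, threshold: int) -> list:
    """Answer a client request: which items have fewer than `threshold` units?"""
    rows = conn.execute(
        "SELECT sku, stock FROM inventory WHERE stock < ? ORDER BY sku",
        (threshold,),
    )
    return rows.fetchall()


conn = build_inventory()
needs_reorder = low_stock(conn, 5)
```

The fixed columns and typed values are what make relational tables a natural fit for structured records such as stock levels and orders.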

Server components

A fully operational server consists of various hardware and software components.

Hardware components include:

Motherboard

The motherboard is the main circuit board in a computer. Its job is to aggregate all the server’s components in one place and enable communication between them.

These components include the chipset, PCIe slots, RDIMM (registered memory module) sockets, and the CPU. Compared with a standard desktop motherboard, a server motherboard often supports two processors, each with multiple cores, and therefore more threads for data processing.

Central Processing Unit (CPU)

The CPU is the brain of a computer. It’s responsible for processing inputs, storing data, and outputting results. Server CPUs are much more powerful than the processors found in an average PC. They must be capable of supporting complex workloads – everything from database transactions and email exchanges to network traffic routing and complex compute tasks.

The maximum capacity of any given server is defined by the CPU. The more cores a CPU has, the more tasks it can process simultaneously. However, the more cores there are, the lower the performance of each individual core tends to be. So, it’s important to strike the right balance between scale and performance.

Random Access Memory (RAM)

RAM is a computer’s short-term memory. It temporarily stores data from the applications running on a server. This allows the CPU to process data faster than if it had to fetch that data from a storage drive each time.

RAM takes the form of a stick (known as a DIMM) inserted into the motherboard. Various generations of RAM are available. Recent generations include DDR3, DDR4, and DDR5 and each generation comes with more advanced features and performance capabilities. It’s worth noting that not all RAM generations are compatible with every motherboard – for example, a DDR3 module won’t work in a motherboard that supports DDR4.

There are two types of RAM: RAM with error correction code (ECC memory) and RAM without error correction code (non-ECC memory). Most servers use ECC memory because it comes with an additional memory chip that helps prevent data corruption by automatically detecting and correcting memory errors. Conversely, non-ECC memory is typically used within consumer-grade laptops and desktop computers.
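The idea of detecting and correcting a memory error can be shown with a toy Hamming(7,4) code, which protects 4 data bits with 3 extra parity bits. This is only a sketch of the principle: real ECC memory uses wider codes implemented in hardware, and the function names below are made up for the example.

```python
def encode(data_bits):
    """Turn 4 data bits into a 7-bit codeword with 3 parity bits added.

    Codeword layout by position 1..7: p1, p2, d1, p3, d2, d3, d4.
    """
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def decode(codeword):
    """Correct up to one flipped bit; return (data_bits, error_position).

    error_position is 0 when no error was detected, otherwise 1..7.
    """
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_position = s1 + 2 * s2 + 4 * s3  # the syndrome points at the bad bit
    if error_position:
        c[error_position - 1] ^= 1  # flip it back
    return [c[2], c[4], c[5], c[6]], error_position


stored = encode([1, 0, 1, 1])
corrupted = list(stored)
corrupted[4] ^= 1  # simulate a single bit flip in memory (position 5)
recovered, position = decode(corrupted)
```

Here the three parity bits play the role that the additional memory chip plays on an ECC module: they carry enough redundant information to locate and undo a single flipped bit.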

Power Supply Unit (PSU)

A server’s PSU doesn’t generate power itself. Instead, it receives power from an electrical outlet and regulates that power in various ways depending on the server model. For example, the PSU might convert the power to the correct voltage to run the system or convert the power from alternating current (AC) to direct current (DC).

The PSU is critical to the functioning of the server, so redundancy is an important consideration. A server can have one or multiple PSUs. A single PSU can supply enough power under normal conditions but, if that PSU fails, the server will be left without power. Adding further PSU modules provides redundancy: if one module fails, there will be a second (or third) on standby to take over.
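The redundancy argument comes down to simple arithmetic: the server stays up after a failure only if the remaining PSUs can still cover the load. The sketch below checks that condition; the function name and the wattage figures are hypothetical.

```python
def survives_single_failure(psu_watts, load_watts):
    """Return True if the load is still covered after the largest PSU fails.

    Losing the largest unit is the worst single failure, so if the remaining
    capacity covers the load in that case, it does in every other case too.
    """
    if not psu_watts:
        return False
    remaining = sum(psu_watts) - max(psu_watts)
    return remaining >= load_watts


single = survives_single_failure([800], 600)          # one PSU, no backup
redundant = survives_single_failure([800, 800], 600)  # second PSU takes over
undersized = survives_single_failure([400, 400], 600) # backup too small alone
```

With one 800 W unit, a failure leaves nothing. With two, the survivor carries the 600 W load alone. Two 400 W units share the load in normal operation but cannot cover it individually.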

Graphics Processing Unit (GPU)

The GPU is a type of processor that accelerates graphics and video rendering by processing many pieces of data simultaneously. GPUs can be integrated into a server’s CPU but may also come as a separate hardware unit.

GPUs are best known for their use within gaming (they were originally designed to speed up the rendering of 3D graphics) but as the technology has matured, GPU capabilities have become increasingly advanced. These days GPUs have a wide range of use cases including AI, deep learning, and high-performance computing.

Storage drives

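Most servers need at least one form of direct-attached storage. The two main types of storage drive are hard-disk drives and solid-state drives, which connect to the server through interfaces such as PCIe and NVMe: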

Hard-Disk Drives (HDD): An HDD is a piece of server hardware comprising multiple stacked disks around a central spindle. The HDD stores data, including the OS, files, and applications - even in the absence of a power supply. HDDs are made up of two central elements – a magnetic platter to retain the data and an actuator arm to read and write data.

Solid-State Drives (SSD): SSDs use non-volatile rewritable memory in a standard disk drive interface. SSDs have no moving parts, making them much faster than hard disks and ideal for data-intensive workloads.

Peripheral Component Interconnect Express (PCIe): PCIe is a standardized interface for connecting components such as graphics cards, storage drives, and network adapters to the motherboard. It facilitates point-to-point connections for non-core components. The PCIe ‘slot’ is the point of connection between these components and the motherboard.

Non-Volatile Memory Express (NVMe): NVMe is a transfer protocol for SSDs and the standardized interface for PCIe-attached SSDs. It provides a very fast way to access non-volatile memory (like flash and solid-state devices).

Network

All servers need at least one network connection, which is created through a network adapter. The network adapter provides a physical port, typically on the motherboard, that enables the server to communicate over a local area network (LAN) and to connect with other devices and the internet.

Chassis (case)

The chassis is a metal case in which a server is housed. Chassis are designed to save space by fitting multiple servers within a single physical structure. The type of chassis is defined by the type of server (rack, blade, or mainframe). For instance, a rackmount server chassis is simply a case designed for mounting a server in a rack.

Software components

When it comes to software, all servers have a minimum of two software components: an OS and a software application. The software application carries out tasks on behalf of the client. It’s these applications that turn the standalone server hardware into a web server, database server, game server, etc. The OS acts as an interface between the server hardware and the server application.

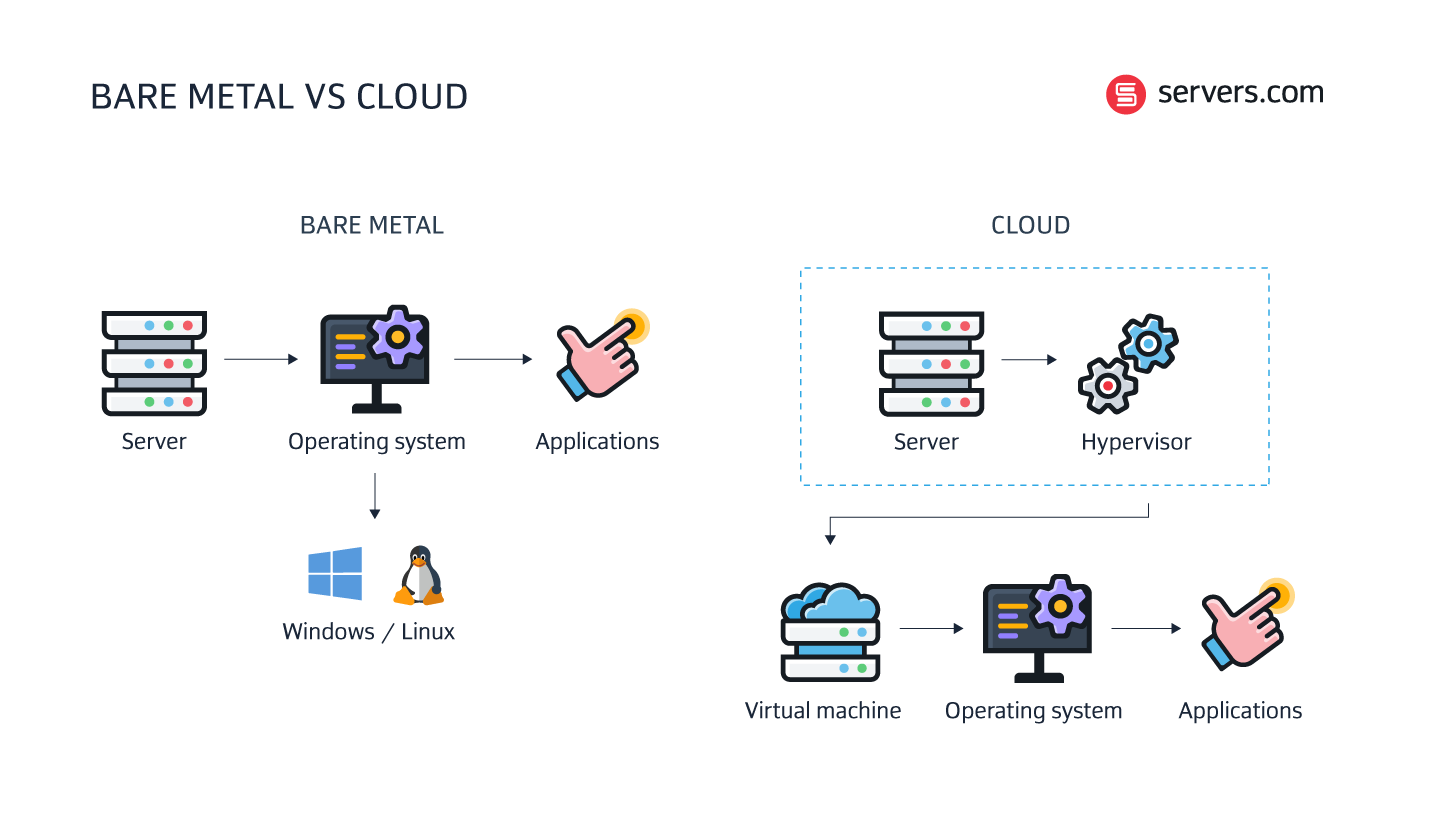

Types of server hosting – bare metal vs cloud servers

It’s important to distinguish the different types of servers from the different types of server hosting. Server hosting is typically divided into two camps – bare metal hosting and cloud hosting. But both ultimately rely on physical machines in a data center. The distinction comes down to how the server components are structured, and the business model.

1. Bare metal servers

Dedicated servers (or bare metal servers) are single-tenanted physical machines that sit in a rack within a data center. Businesses using bare metal servers have exclusive access to the entire machine and its resources. In a bare metal environment, the OS is installed directly on the server.
