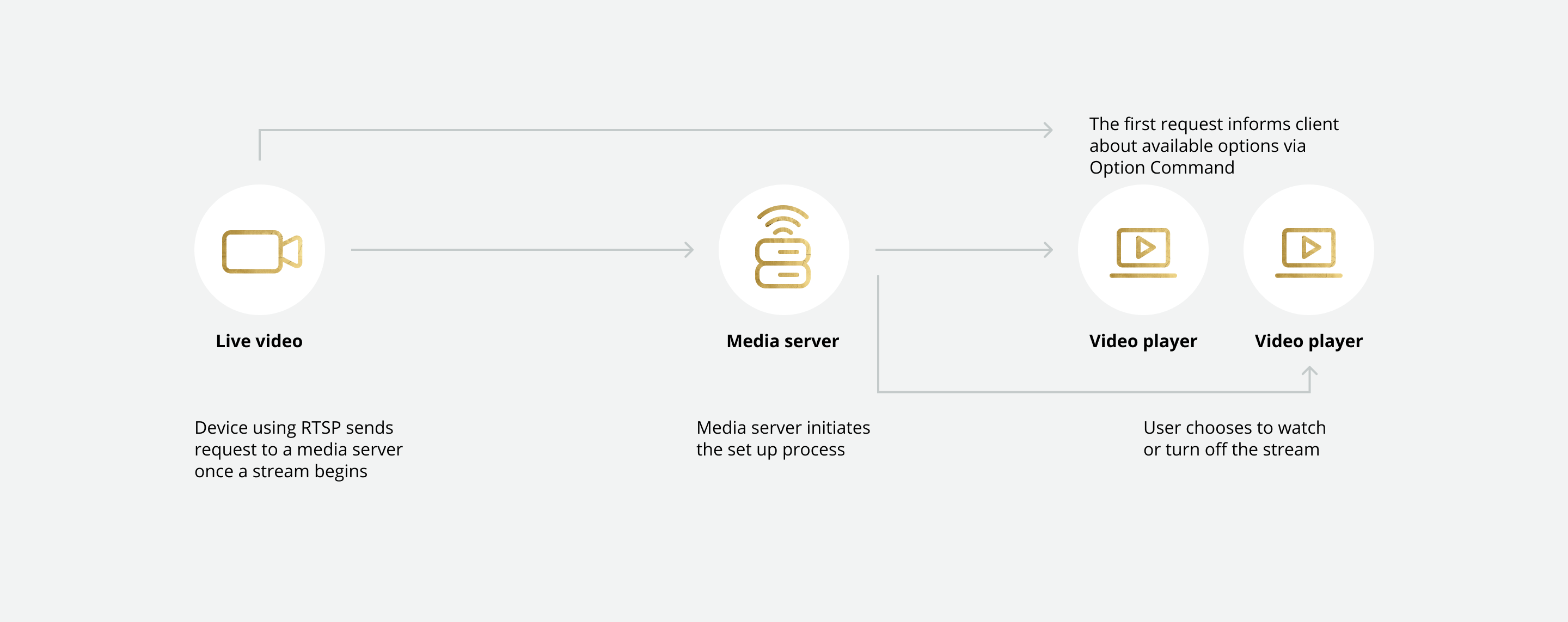

After its publication in 1998, RTSP quickly became the leading protocol for audio and video streaming. Although it has since been eclipsed by HTTP-based technologies, it is still widely used in IP cameras, robotics, and IoT devices thanks to its simple design, broad device compatibility, and low latency.

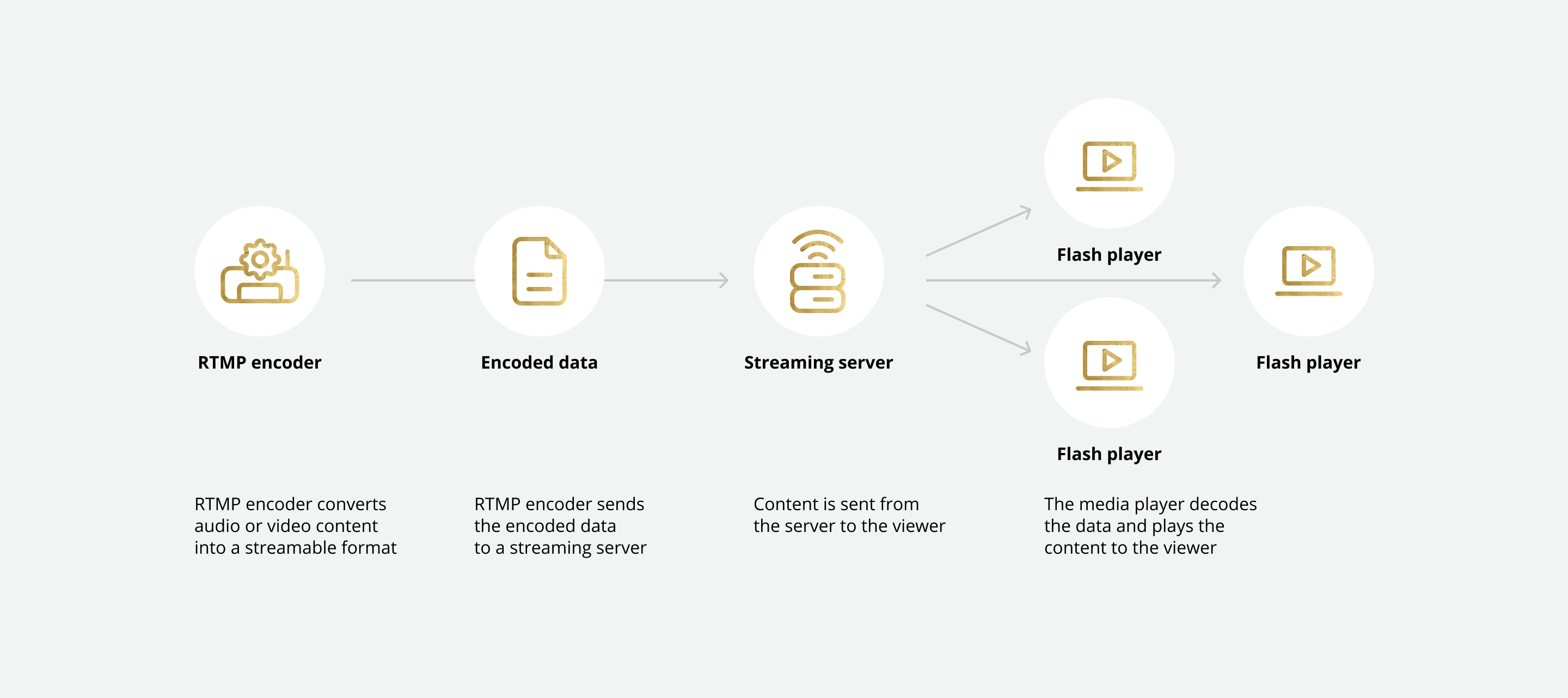

RTMP and RTSP were the leading protocols of their time but have largely been replaced by HTTP-based and adaptive bitrate streaming technologies, which are easier to scale to the demands of large broadcasts.

What is HTTP?

HTTP stands for Hypertext Transfer Protocol. HTTP defines how information is encoded and transferred between a client and a web server, and it is the foundation of data exchange on the web.

In its simplest form, HTTP streaming is a push-style transfer: the web server keeps an HTTP connection open and continuously sends data to the client as it becomes available.
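One common way an HTTP/1.1 server keeps a response open and keeps sending data of unknown total length is chunked transfer coding (`Transfer-Encoding: chunked`): each piece of the body is prefixed with its size in hexadecimal, and a zero-size chunk marks the end. The sketch below only illustrates that framing; the function names are hypothetical, and HTTP/2 and HTTP/3 carry the body in DATA frames instead of using chunked coding.

```python
def encode_chunk(data: bytes) -> bytes:
    """Frame one piece of a streamed HTTP/1.1 body as a chunk.

    Layout: chunk size in hex, CRLF, the data bytes, CRLF.
    """
    return f"{len(data):X}\r\n".encode("ascii") + data + b"\r\n"


def encode_stream(pieces) -> bytes:
    """Frame a sequence of pieces and append the terminating zero-size chunk."""
    # Empty pieces are skipped, because a zero-size chunk would end the body early.
    body = b"".join(encode_chunk(p) for p in pieces if p)
    return body + b"0\r\n\r\n"


def decode_stream(raw: bytes) -> bytes:
    """Reassemble a chunked body (drops chunk extensions, does not parse trailers)."""
    out = bytearray()
    pos = 0
    while True:
        line_end = raw.index(b"\r\n", pos)
        # Anything after ';' on the size line is a chunk extension; ignore it.
        size = int(raw[pos:line_end].split(b";")[0], 16)
        pos = line_end + 2
        if size == 0:
            return bytes(out)
        out += raw[pos:pos + size]
        pos += size + 2  # skip the data and its trailing CRLF


if __name__ == "__main__":
    wire = encode_stream([b"frame-1", b"frame-22"])
    print(wire)
    print(decode_stream(wire))
```

In a real server, each chunk would be written to the socket as soon as it is produced, which is what lets the client start consuming data before the response is complete.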

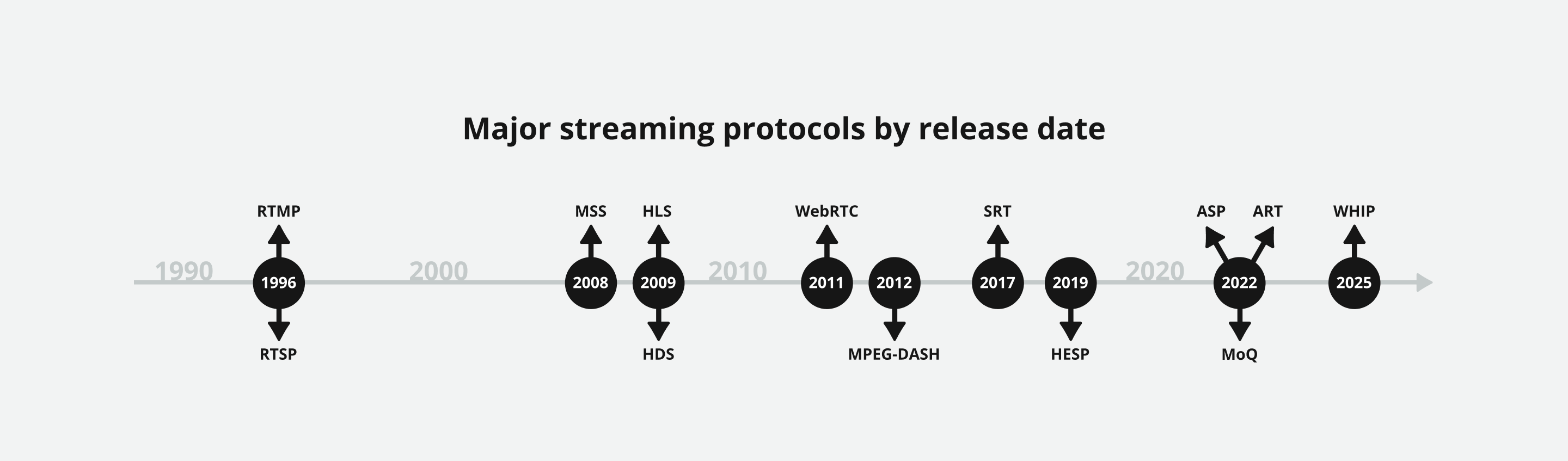

HTTP/1.0 was introduced in 1996, followed by HTTP/1.1, HTTP/2, and HTTP/3 in 1997, 2015, and 2022, respectively.

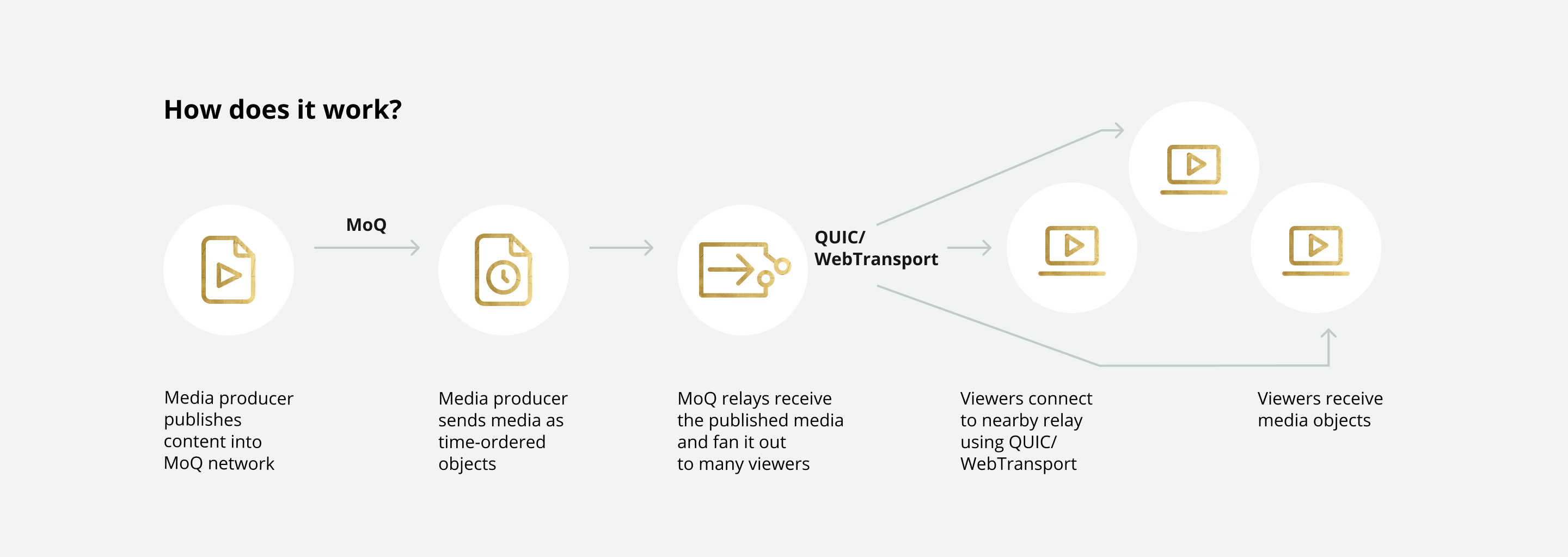

HTTP/3 remains the most recent version, with no HTTP/4 on the horizon as of 2026. This is, in large part, due to the parallel development of newer technologies such as Media over QUIC (MoQ).

What is adaptive bitrate streaming?

First created in 2002, adaptive bitrate streaming (ABS) is a technique that makes HTTP streaming more resilient to changing network conditions.

With ABS, each stream is encoded at several bitrates and quality levels, and the player switches between these versions to match the bandwidth currently available, so that streams are delivered efficiently and at the highest usable quality.
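To make the switching idea concrete, here is a minimal sketch of how a player might pick one of several pre-encoded versions (renditions) of a video based on measured throughput. The bitrate ladder, the `choose_rendition` helper, and the 0.8 safety factor are all hypothetical; production players typically use more elaborate logic (for example, also taking the playback buffer level into account).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    name: str
    bitrate_kbps: int  # average encoded bitrate of this version of the video


# Hypothetical bitrate ladder: the same video encoded at several quality levels.
LADDER = [
    Rendition("240p", 400),
    Rendition("480p", 1200),
    Rendition("720p", 3000),
    Rendition("1080p", 6000),
]


def choose_rendition(ladder, measured_kbps, safety=0.8):
    """Return the highest-bitrate rendition that fits within a fraction of throughput.

    The safety factor leaves headroom so a small dip in bandwidth does not
    immediately stall playback. Falls back to the lowest rendition if none fit.
    """
    budget = measured_kbps * safety
    by_bitrate = sorted(ladder, key=lambda r: r.bitrate_kbps)
    best = by_bitrate[0]
    for r in by_bitrate:
        if r.bitrate_kbps <= budget:
            best = r
    return best


if __name__ == "__main__":
    for kbps in (300, 1600, 5000, 10000):
        print(kbps, "kbps ->", choose_rendition(LADDER, kbps).name)
```

A player would rerun this choice before requesting each new segment, which is what lets quality step down when the connection degrades and step back up when it recovers.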

The 00s - HLS, HDS, and MSS

At the turn of the millennium, live streaming and VoD gained even more traction. This was the decade in which some of the biggest names in the industry emerged: YouTube hit the scene in 2005, followed by Twitch (then justin.tv) in 2006, and Netflix launched its streaming service in 2007. As internet access and mobile technology spread, internet video streaming reached a mass audience.

In 2005, Adobe acquired Macromedia, and Adobe Flash quickly became a mainstay of video streaming. But in 2010, three years after the iconic release of the first iPhone, Steve Jobs publicly explained why Apple would not allow Flash on its mobile devices.

In his open letter, “Thoughts on Flash,” Jobs writes:

“I wanted to jot down some of our thoughts on Adobe’s Flash products so that customers and critics may better understand why we do not allow Flash on iPhones, iPods and iPads. Adobe has characterized our decision as being primarily business driven – they say we want to protect our App Store – but in reality it is based on technology issues. Adobe claims that we are a closed system, and that Flash is open, but in fact the opposite is true.”

Apple, meanwhile, had already been working on its own streaming format: HLS.

HTTP Live Streaming (HLS)

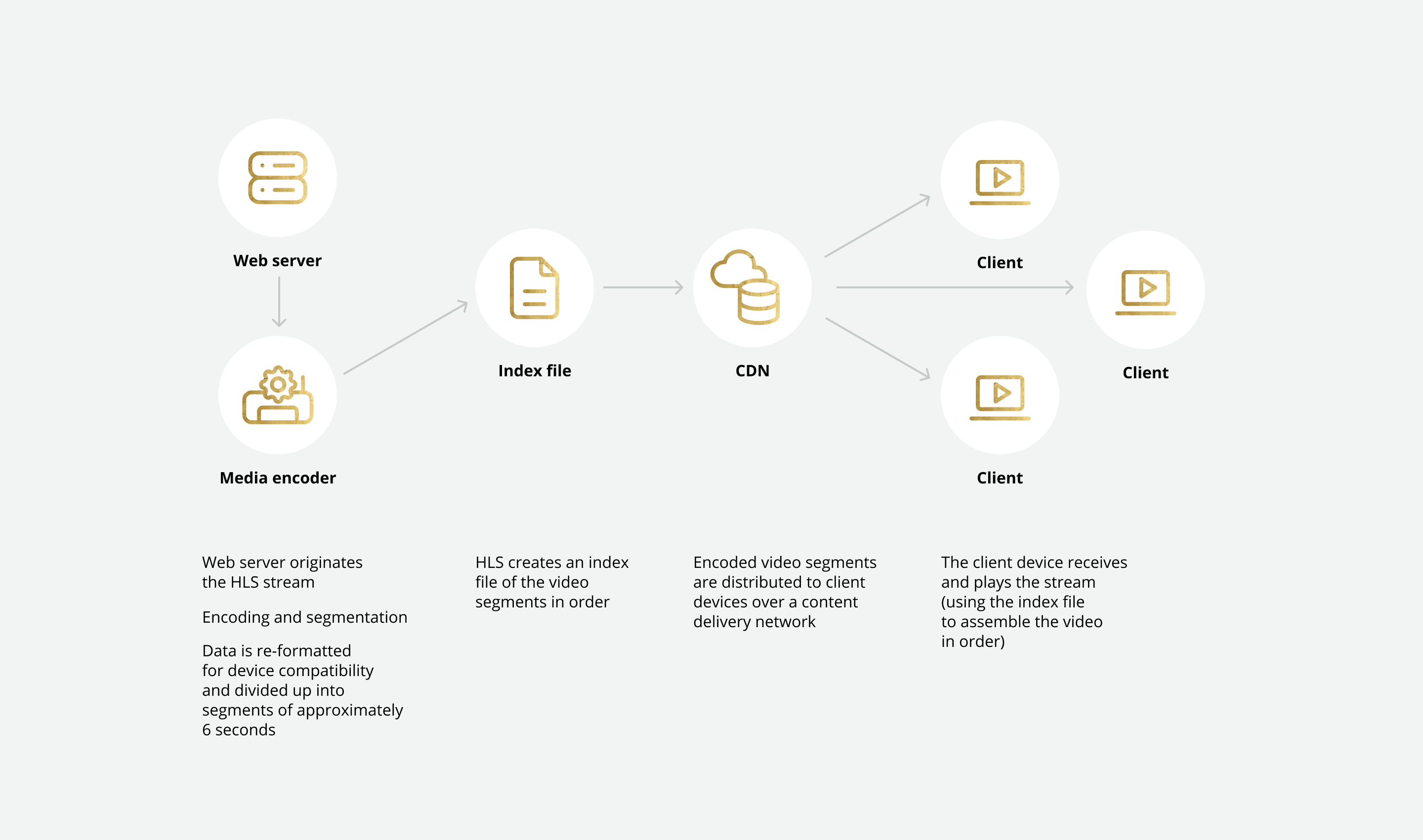

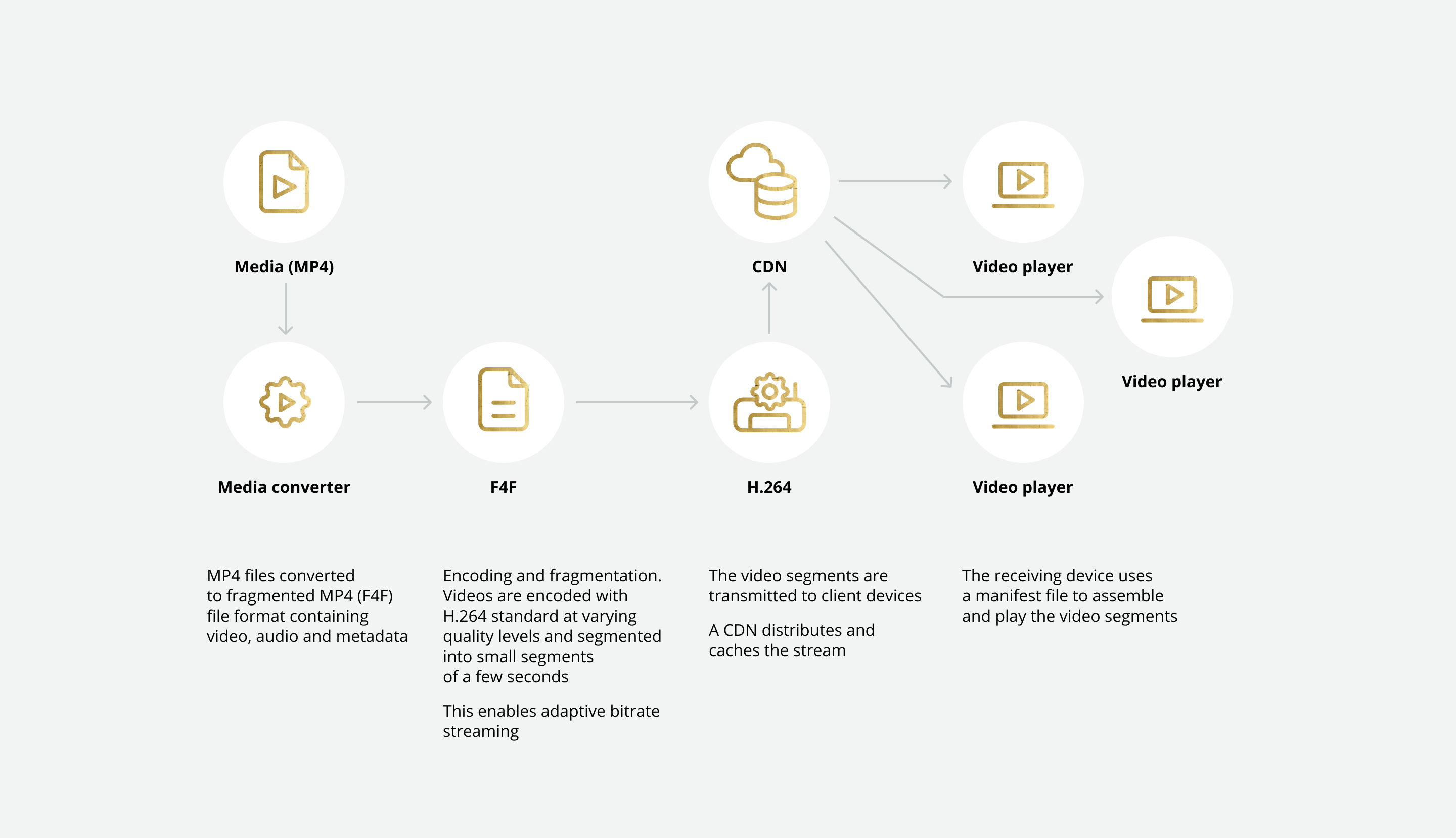

HLS is an adaptive HTTP-based streaming protocol that Apple first released in 2009. HLS splits encoded video and audio into short chunks, which are transmitted over HTTP to the viewer’s device. The protocol was initially supported only on iOS but has since become widely supported across browsers and devices. By the time Adobe Flash reached end of life in 2020, HLS had become the streaming protocol of choice.
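To illustrate the chunking idea, here is a minimal sketch that divides a finished recording into fixed-length segments and writes the kind of media playlist (an `.m3u8` file) an HLS player reads to find those chunks. It only produces the playlist text and does not encode or cut any media. The segment file names and the helper functions are hypothetical, and real segmenters cut at keyframes, so their segment durations vary.

```python
import math


def split_into_segments(total_seconds, segment_seconds=6.0):
    """Divide a recording's duration into segment durations of at most segment_seconds."""
    durations = []
    remaining = total_seconds
    while remaining > 1e-9:
        d = min(segment_seconds, remaining)
        durations.append(d)
        remaining -= d
    return durations


def media_playlist(segment_durations, uri_pattern="segment{}.ts"):
    """Build a minimal HLS media playlist for a finished (VoD) stream."""
    # EXT-X-TARGETDURATION must be a whole number of seconds no smaller
    # than any segment's rounded duration; ceil of the maximum satisfies that.
    target = math.ceil(max(segment_durations))
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",  # version 3 allows decimal EXTINF durations
        f"#EXT-X-TARGETDURATION:{target}",
        "#EXT-X-MEDIA-SEQUENCE:0",
    ]
    for i, dur in enumerate(segment_durations):
        lines.append(f"#EXTINF:{dur:.3f},")  # duration of the segment that follows
        lines.append(uri_pattern.format(i))
    lines.append("#EXT-X-ENDLIST")  # no more segments will be added
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(media_playlist(split_into_segments(20.0, 6.0)), end="")
```

For a 20-second recording with 6-second segments, this yields four entries (three 6-second chunks and a final 2-second chunk). Because the playlist ends with `#EXT-X-ENDLIST`, a player treats it as complete rather than polling for new segments as it would during a live stream.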

How does HLS work?